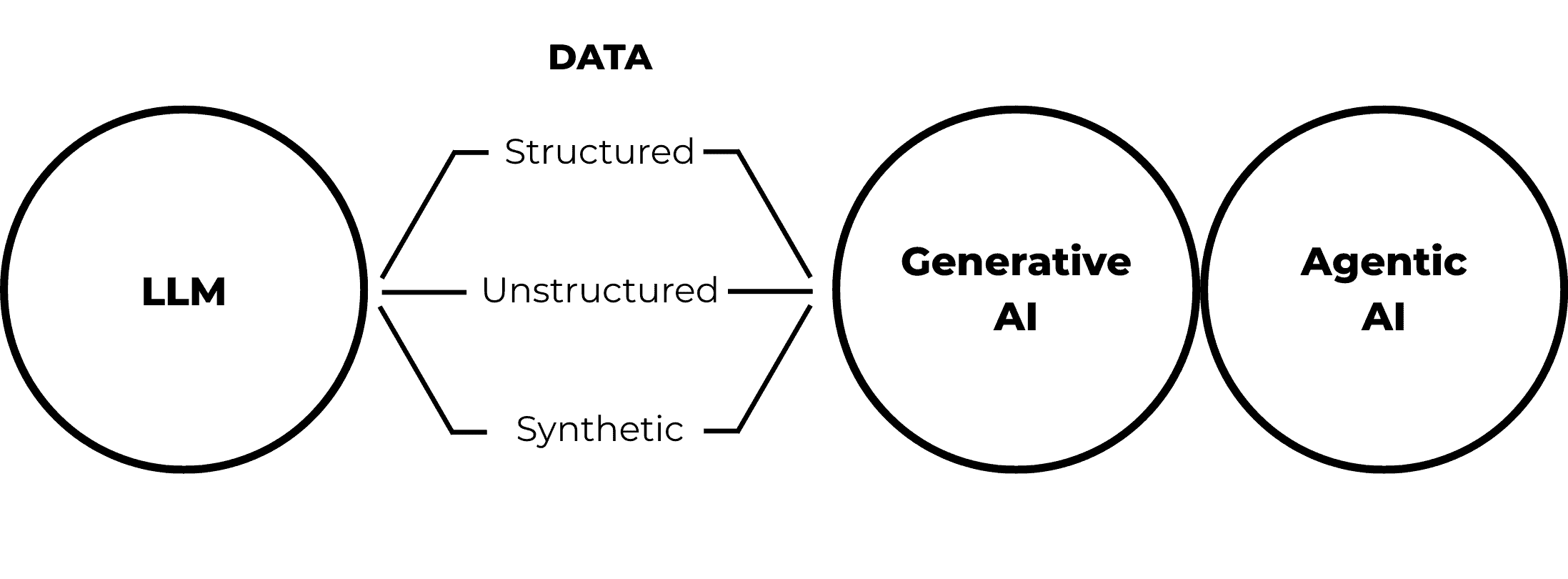

Generative and agentive artificial intelligence (AI) systems have become deeply embedded in enterprise workflows, creating a new and more complex data security reality. Large Language Models (LLMs) ingest, interpret, summarize, and act on massive volumes of both structured and unstructured data. While this unlocks tremendous value, it also redraws the boundaries of data ownership and control and introduces new risks.

Understanding the fundamental concepts behind this innovative technology is critical to recognizing the risks and challenges of securing sensitive data in LLMs, especially as generative and agentive AI models introduce new complexities in protecting both structured and unstructured data across environments.

Fundamentals of Artificial Intelligence

While AI itself is not new, its rapid evolution has revealed new risks that many organizations are only beginning to recognize. To assess and mitigate these risks, it is important to understand the fundamentals of AI, including structured, unstructured, and synthetic data, LLMs, and generative and agentive models. Understanding what each concept represents and how the concepts interact enables us to recognize the risks, challenges, and opportunities we face in protecting sensitive data in today’s enterprise environments.

Structured vs. Unstructured Data

Structured data is data that fits neatly into predefined formats, such as rows and columns in databases or spreadsheets. Examples include tables and account records. Because it follows an established schema, structured data has traditionally been easier to classify, manage, and secure with proven tools for controlling access and visibility. In contrast, unstructured data does not follow a predefined format. Examples include documents, images, emails, chat logs, and social media posts, among other forms.

Structured data once accounted for most of the information enterprises used, but that has changed: unstructured data is now the main resource. According to Gartner®, “unstructured data such as documents and multimedia files accounts for 70% to 90% of organizational data, and poses unique governance challenges due to its volume, variety, and lack of coherent structure. Making it AI ready is therefore challenging.”[1] Because unstructured data lacks a consistent schema, related data sets often need to be classified, tagged, and linked before they are made available to AI models.
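As a rough illustration of the tagging step, the Python sketch below scans a piece of unstructured text and attaches sensitivity tags. It is deliberately simple and pattern-based: the tag names (`PII:EMAIL`, `PII:SSN`, `PCI:CARD`), the regular expressions, and the function names are hypothetical, and real AI-driven classifiers would also weigh context. The Luhn checksum is a standard validity check for payment card numbers and is used here only to reduce false positives.

```python
import re

# Hypothetical patterns and tag names, for illustration only.
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    # Walk the digits from the right, doubling every second one.
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def tag_document(text: str) -> set[str]:
    """Return the set of sensitivity tags detected in a piece of unstructured text."""
    tags = set()
    if EMAIL_RE.search(text):
        tags.add("PII:EMAIL")
    if SSN_RE.search(text):
        tags.add("PII:SSN")
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        # Only tag digit runs that are plausible card numbers.
        if luhn_valid(digits):
            tags.add("PCI:CARD")
            break
    return tags
```

For example, `tag_document("Contact jane@example.com, card 4111 1111 1111 1111")` returns the tags `PII:EMAIL` and `PCI:CARD`. Tags like these could then drive masking or access decisions before the document is exposed to a model.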

Synthetic Data

Synthetic data is simulated data created for the purpose of training AI models. It preserves the patterns exhibited by real-world data but is generated artificially, so it does not contain the sensitive, identifiable details that would compromise personal information. For AI model training, synthetic data has become a popular and safer alternative to real data.
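One simple way to illustrate the idea is to generate records that mimic the per-column statistics of a real data set without copying its identifiers. The sketch below assumes a hypothetical list of dictionaries with an `id` column; it models numeric columns as normal distributions and other columns by observed frequency. Because each column is sampled independently, correlations between columns are not preserved, and production synthetic-data generators typically use much richer models and still need to be checked for leakage of real records.

```python
import random
import statistics


def synthesize(records, n, id_column="id", seed=0):
    """Generate n synthetic records that mimic per-column statistics of `records`.

    The real identifier column is dropped and replaced with fabricated IDs.
    Numeric columns are drawn from a normal distribution with the real mean and
    standard deviation; other columns are drawn by observed frequency. Columns are
    sampled independently, so cross-column correlations are NOT preserved.
    """
    rng = random.Random(seed)
    columns = [c for c in records[0] if c != id_column]
    samplers = {}
    for col in columns:
        values = [r[col] for r in records]
        if all(isinstance(v, (int, float)) for v in values):
            mu, sigma = statistics.mean(values), statistics.pstdev(values)
            samplers[col] = lambda mu=mu, sigma=sigma: round(rng.gauss(mu, sigma), 2)
        else:
            samplers[col] = lambda values=values: rng.choice(values)
    synthetic = []
    for i in range(n):
        row = {id_column: f"SYN-{i:05d}"}  # fabricated identifier, never a real one
        row.update({col: samplers[col]() for col in columns})
        synthetic.append(row)
    return synthetic
```

Calling `synthesize(real_customers, 1000)` on a hypothetical list of customer records would return 1,000 rows with `SYN-` identifiers and plausible balances and segments, none tied to a real customer ID.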

LLMs

LLMs are defined by IBM as “giant statistical machines that repeatedly predict the next word in a sequence.”[2] As the term implies, LLMs are trained on very large datasets, which enable them to understand and generate human-like language. They are the engine under the hood of modern AI capabilities: they power generative AI in conversational, chat-like settings that produce business insight, and ultimately they enable agentive AI models to execute tasks on behalf of human users. A simple example of an LLM at work is the word-prediction feature of an AI-assisted word processor. Having been trained on vast amounts of text, the LLM can predict which word is likely to come next as the user types.
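To make the “predict the next word” idea concrete, the toy sketch below counts which word follows which in a small training text and then predicts the most frequent follower. This bigram counter is only an analogy: real LLMs use neural networks over tokens with far longer context, and the function names here are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    words = corpus.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(model, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(model, start: str, length: int) -> list[str]:
    """Repeatedly predict the next word, feeding each prediction back in."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(model, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out
```

Trained on the text "please review the attached report . please review the quarterly report . please send the report", the model predicts "review" after "please", because that pairing appears twice while "please send" appears once.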

Generative AI

Generative AI refers to systems that can create new content, such as text, images, or code, based on patterns learned during training. Rather than simply retrieving information, generative AI models produce new output by drawing on a combination of available data sources (structured or unstructured, real or synthetic) through an exchange, such as a chat, with the human user.

Agentive AI

Agentive AI goes a step further. Once new output is generated, these models can autonomously make decisions and execute actions based on the generated findings and insight. For example, a system that monitors user behavior and, when it detects a deviation, automatically blocks access and issues an alert is acting as an agent on behalf of its human users.

Viewed from the bottom up, these foundational concepts form a chain: the LLM transforms the real or synthetic, structured and unstructured data at its disposal; generative models turn that data into insight; and agentive AI models act on that insight. Turning LLMs from passive tools into active components of a workflow dramatically increases the power of AI, but it also magnifies the associated security implications.

New Risks: Increased Data Exposure and Poisoning

The risks associated with AI are growing rapidly. Gartner® predicts that, “by 2028, 25% of enterprise breaches will be traced back to AI agent abuse, from both external and malicious internal actors.”[3] At the input stage of these models, two areas are of particularly high concern: unintended exposure of sensitive, often personally identifiable, input data, and deliberate manipulation or poisoning of training sets.

Data Exposure

LLMs can inadvertently expose sensitive data absorbed during training, drawing inferences and associations that end up revealing sensitive details. For this reason, confidential details and identifiers should always be removed from training sets. Examples of indirect disclosure by inference are not hard to find: carefully anonymized data sets can be re-identified through simple association. An LLM trained on previously anonymized data can enable re-identification when it is queried across a combination of attributes, particularly in small populations. Another aspect to consider is loss of visibility. Unlike traditional applications, LLMs learn and generate insight through probabilistic models, so there is no clear audit trail showing what data was used, how it influenced the output, or where sensitive information may have been exposed.
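The re-identification risk described above can be checked before data ever reaches a model. The sketch below applies the k-anonymity test: it counts how often each combination of quasi-identifiers (attributes such as ZIP code, age band, or role) appears and returns the records whose combination is shared by fewer than `k` records. The field names and the default `k=2` are hypothetical choices for illustration.

```python
from collections import Counter


def reidentification_risk(records, quasi_identifiers, k=2):
    """Return records whose quasi-identifier combination appears fewer than k times.

    A record that is unique (or nearly so) on a combination of seemingly harmless
    attributes can be linked back to a person, even with names removed.
    """

    def key(record):
        return tuple(record[q] for q in quasi_identifiers)

    counts = Counter(key(r) for r in records)
    return [r for r in records if counts[key(r)] < k]
```

In a small anonymized data set, a single 60-69-year-old director in one ZIP code is flagged, while two 30-39-year-old engineers sharing the same ZIP code are not. Flagged records can be generalized (for example, by widening age bands) or removed before training.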

Data Poisoning

LLMs rely on the integrity of their training data to ensure that generative and agentive models function correctly. Deliberate manipulation or corruption of the training data can lead to misclassification and malfunction, with potentially serious repercussions. Data poisoning attacks can be targeted, for example using prompt injection in generative AI chats to alter responses, or nontargeted, where the attack aims to weaken the AI's ability to process data by creating conflicting scenarios and confusing situations for the LLM.
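One basic integrity check against nontargeted poisoning is to look for identical training inputs that carry contradictory labels. The sketch below assumes a hypothetical training set of `(text, label)` pairs. It would not catch subtle or targeted poisoning, which calls for provenance tracking and statistical review of the data as well.

```python
from collections import defaultdict


def find_label_conflicts(examples):
    """Return inputs that appear in the training set with more than one label.

    Identical inputs with contradictory labels are one symptom of nontargeted
    poisoning (or of ordinary labeling errors) and are worth quarantining for review.
    """
    labels_by_input = defaultdict(set)
    for text, label in examples:
        # Normalize whitespace and case so trivial variants are grouped together.
        normalized = " ".join(text.lower().split())
        labels_by_input[normalized].add(label)
    return {text: labels for text, labels in labels_by_input.items() if len(labels) > 1}
```

For instance, if "Wire $9,000 to new payee" is labeled suspicious in one example and benign in a near-identical one, the function returns that input with both labels so it can be reviewed before training continues.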

Challenges: Securing Data from and with AI

Protecting Data from LLMs

As the risks above show, preventing LLMs from ingesting or accessing sensitive data is a critical challenge. Best practices to mitigate this risk include:

- Data minimization: Expose only the minimum data required for a given task, and use synthetic data whenever possible to limit the impact of a compromise.

- Tokenization or masking: Replace or hide all sensitive fields presented to LLMs.

- Zero-trust interactions: Treat LLMs as semi-trusted services with scoped access.

For example, real account numbers can be replaced with tokens before a prompt reaches an LLM, and only the results are detokenized. This allows LLMs to be trained and queried without sensitive data that could inadvertently be revealed in the process. The LLM can still generate new insight, although that insight carries its own security implications.
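The tokenize-then-detokenize flow can be sketched in a few lines of Python. Everything here is a hypothetical illustration rather than any product's API: the account-number pattern (8 to 12 digits), the `TokenVault` class, and `fake_llm`, which stands in for a real model call. The in-memory dictionary is not a secure vault; a real deployment would rely on a hardened tokenization service and restrict detokenization to authorized consumers of the result.

```python
import re
import secrets

# Hypothetical account-number format: 8 to 12 consecutive digits.
ACCOUNT_RE = re.compile(r"\b\d{8,12}\b")
TOKEN_RE = re.compile(r"TKN_[0-9a-f]{8}")


class TokenVault:
    """In-memory token vault for illustration only."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, text: str) -> str:
        """Replace each account number with a random, reusable token."""

        def replace(match):
            value = match.group()
            if value not in self._value_to_token:
                token = f"TKN_{secrets.token_hex(4)}"
                while token in self._token_to_value:  # avoid rare collisions
                    token = f"TKN_{secrets.token_hex(4)}"
                self._value_to_token[value] = token
                self._token_to_value[token] = value
            return self._value_to_token[value]

        return ACCOUNT_RE.sub(replace, text)

    def detokenize(self, text: str) -> str:
        """Restore original values for known tokens; unknown tokens stay as-is."""
        return TOKEN_RE.sub(lambda m: self._token_to_value.get(m.group(), m.group()), text)


def fake_llm(prompt: str) -> str:
    """Stand-in for a real LLM call: it only ever sees the tokenized prompt."""
    return f"Summary: flagged activity on {prompt.split()[-1]}"
```

In use, `vault.tokenize("Review account 123456789")` yields a prompt such as `Review account TKN_3f9a0c12`, the model works only with the token, and `vault.detokenize(...)` restores the real number in the final result for the authorized reader.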

Using AI as a Data Security Enabler

AI can also be a powerful defensive tool, allowing sensitive data to be discovered, classified, and protected according to defined procedures. Typical examples of AI-driven processes used for this purpose include:

- Sensitive data discovery: AI classifies sensitive data in structured and unstructured environments based on context, not just on predefined patterns.

- Behavioral analytics: AI identifies atypical user behavior to expose insider threats.

- Adaptive access control: AI dynamically adjusts the privileges of users (both humans and AI agents) based on risk profile, behavior, and context.

For example, an AI system might detect an unusual query pattern from an AI agent and automatically restrict that agent's access to certain data sets.
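The agent-monitoring example can be sketched with a simple statistical baseline standing in for a learned behavioral model: flag a query volume that sits far above an agent's historical mean (a z-score test), then revoke that agent's access to sensitive data sets and raise an alert. The `AccessController` class, the agent and data set names, and the threshold of 3 standard deviations are all hypothetical choices.

```python
import statistics


def is_anomalous(history, current, threshold=3.0):
    """Flag `current` if it is more than `threshold` standard deviations above the
    agent's historical mean (a simple z-score test)."""
    mean = statistics.mean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        return current > mean
    return (current - mean) / stdev > threshold


class AccessController:
    """Hypothetical policy point that tracks which data sets each agent may read."""

    def __init__(self, grants):
        self.grants = {agent: set(sets) for agent, sets in grants.items()}
        self.alerts = []

    def observe(self, agent, history, current, sensitive_sets):
        """Check an agent's latest query volume; restrict access if it is anomalous."""
        if not is_anomalous(history, current):
            return True
        self.grants[agent] = self.grants.get(agent, set()) - set(sensitive_sets)
        typical = statistics.mean(history)
        self.alerts.append(
            f"{agent}: {current} queries vs. typical {typical:.0f}; "
            f"access to {sorted(sensitive_sets)} revoked"
        )
        return False
```

With a history of roughly 40 queries per hour, a burst of 400 queries from a reporting agent would remove its access to a customer PII data set while leaving its other grants in place, and the alert gives a human reviewer the context needed to restore or keep the restriction.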

A Balancing Act

Given these challenges, organizations today must safeguard sensitive data from AI while also using AI to better protect the enterprise and its sensitive data assets. Achieving this balance requires a carefully designed, policy-driven data security architecture: one that abstracts security controls away from individual applications and enforces them consistently across the business environment.

The Way Forward

To a degree that depends on how widely an organization adopts it, AI will unquestionably increase data security threats by accelerating access, amplifying the inferences that can be drawn across data sets, and enabling autonomous actions. At the same time, it offers an unprecedented ability to map relationships and identify patterns at scale. To help manage risk, AI models can detect anomalies and insider threats, continuously classify and tag data so that protection mechanisms can be applied, enforce least-privilege access controls dynamically, and automatically generate detailed audit reports.

AI & Data Security Best Practices

AI is here, and organizations must adapt or lose out to those who use the technology wisely to advance their capabilities, performance, and competitiveness. To move forward responsibly, enterprises should focus on implementing best practices such as:

- Abstracting data protection capabilities from applications: Enforcing security through centralized, policy-driven services.

- Protecting data before it enters AI workflows: Encrypting, tokenizing, or masking sensitive data to enable complete and safe AI training.

- Limiting AI access through scoping: Never giving LLMs unrestricted data visibility.

- Maintaining auditability: Knowing where data comes from and how it is used.

- Keeping humans in the loop: As agentive AI models take autonomous actions, this becomes critically important for irreversible and high-impact processes.
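Several of these practices can meet in one centrally managed enforcement point. The sketch below assumes a hypothetical policy dictionary and `PolicyGate` class: it scopes each record to the fields allowed for a given AI task (denying everything by default), requires a named human approver before high-impact agent actions, and records every decision in an audit log. It illustrates the pattern only and is not the API of any particular product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical centrally managed policy: which fields each AI task may see, and
# which agent actions are high impact and need a human approver.
POLICY = {
    "allowed_fields": {"churn_analysis": {"customer_id_token", "tenure_months", "plan"}},
    "high_impact_actions": {"delete_records", "transfer_funds"},
}


@dataclass
class PolicyGate:
    policy: dict
    audit_log: list = field(default_factory=list)

    def _audit(self, event, **details):
        """Append a timestamped entry so every decision can be traced later."""
        entry = {"time": datetime.now(timezone.utc).isoformat(), "event": event}
        entry.update(details)
        self.audit_log.append(entry)

    def scope(self, task, record):
        """Return only the fields the policy allows for this task (default: none)."""
        allowed = self.policy["allowed_fields"].get(task, set())
        scoped = {k: v for k, v in record.items() if k in allowed}
        self._audit("data_released", task=task, fields=sorted(scoped))
        return scoped

    def authorize(self, action, approved_by=None):
        """Allow low-impact actions; require a named human approver for high-impact ones."""
        needs_human = action in self.policy["high_impact_actions"]
        allowed = not needs_human or approved_by is not None
        self._audit("action_checked", action=action, approved_by=approved_by, allowed=allowed)
        return allowed
```

Because applications call the gate rather than embedding their own rules, changing `POLICY` in one place changes enforcement everywhere, which is the point of abstracting data protection away from individual applications.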

Leading with Prime Factors

Prime Factors has been a leader in data protection for over forty years, delivering comprehensive, data-centric cryptographic solutions that help organizations secure critical data across applications and environments. Built with flexibility and crypto-agility at its core, Prime Factors’ EncryptRIGHT application-level data protection platform abstracts security functions from applications, enabling centralized policy management with distributed local enforcement. This approach allows organizations to protect sensitive data everywhere it is used, moved, or stored, including in AI-driven workflows, without re-architecting applications or disrupting business operations.

As AI reshapes how data is accessed, shared, and used, Prime Factors is ready to help enterprises reassert control, reduce risk, and build trust. To learn more about application-level data protection and how EncryptRIGHT can support your critical data assets and crypto-agility journey, request your free trial today.

[1] Gartner, Governing Unstructured Data for AI Readiness: A Strategic Roadmap, by Melody Chien, 14 Aug 2025. Gartner is a trademark of Gartner, Inc. and/or its affiliates.

[2] IBM Think, What Are Large Language Models (LLMs)?, by Cole Stryker. Retrieved 6 Feb 2025.

[3] Gartner, Investor Opportunities in IAM for AI Agents, by Tarun Rohilla and Frank Marsala, 21 Nov 2025. Gartner is a trademark of Gartner, Inc. and/or its affiliates.